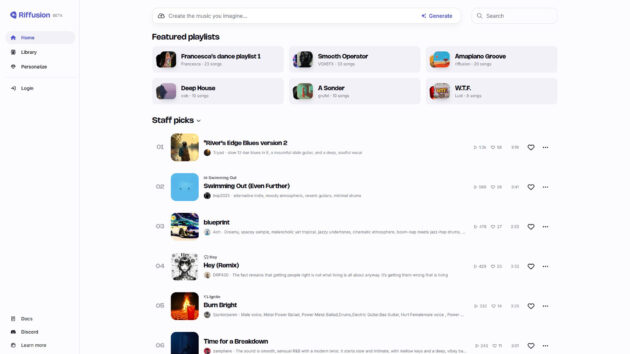

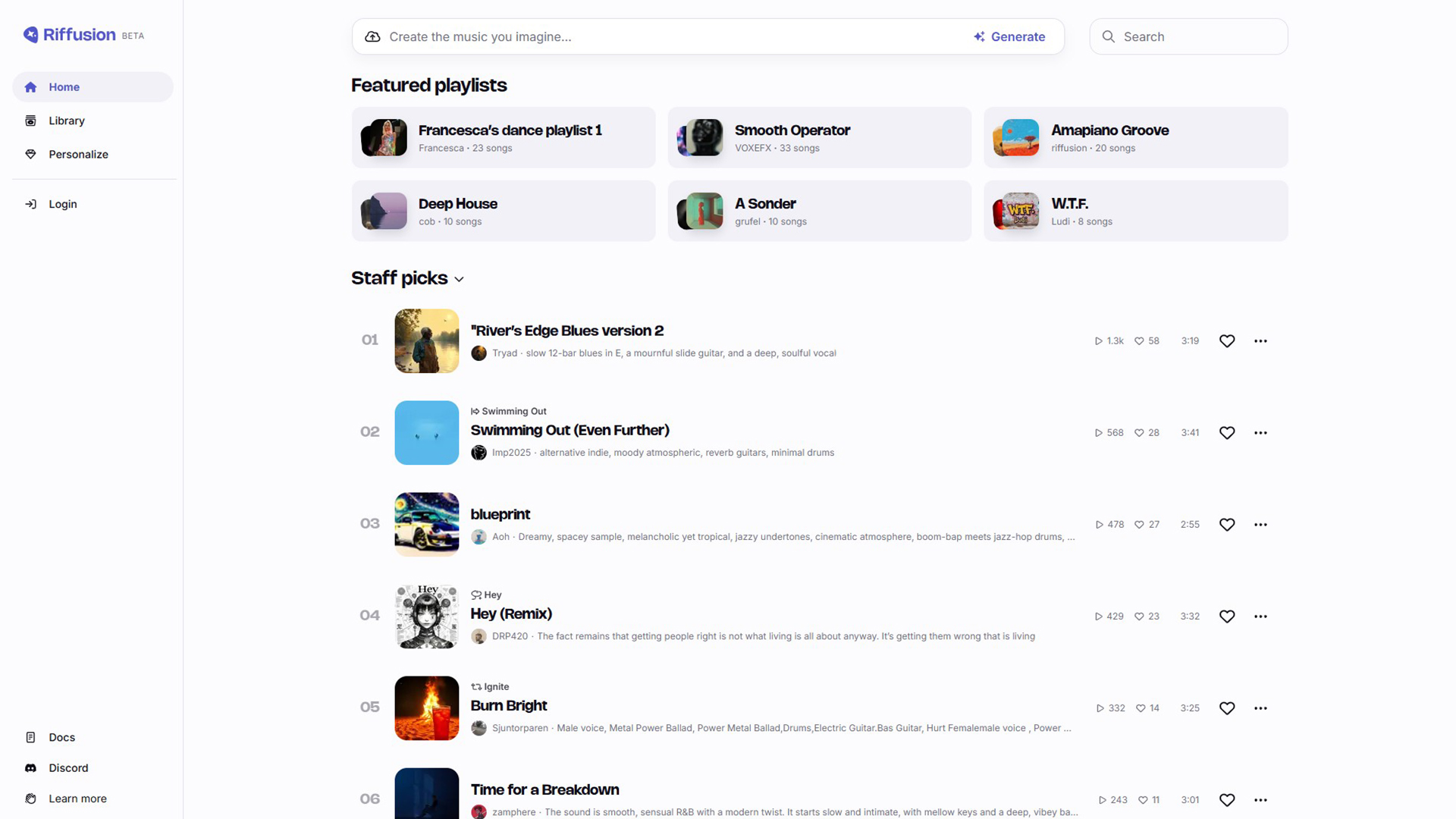

RiffusionAI

Riffusion is an AI-powered music generation tool.

What is Riffusion?

This is an open-source AI model that generates music using stable diffusion. Unlike traditional methods that rely on simple audio samples, it generates music through images of sound, also known as spectrograms. By converting text prompts into these spectrograms, it can then dissect the images and create corresponding audio clips. This allows for the creation of varied musical styles and genres.

Key Features:

- Text-to-music generation

- Uses stable diffusion

- Generates spectrogram images

- Real-time music creation

- Supports varied musical styles

- User-friendly interface

Use Cases of Riffusion:

- Creating music for personal projects

- Generating background scores for videos

- Experimenting with new musical ideas

- Educational purposes in AI and music

- Enhancing creative workflows

Get Started

Create mind-blowing AI-generated music with it! Get Started